The Ambisonics Workflow

Basic Workflow

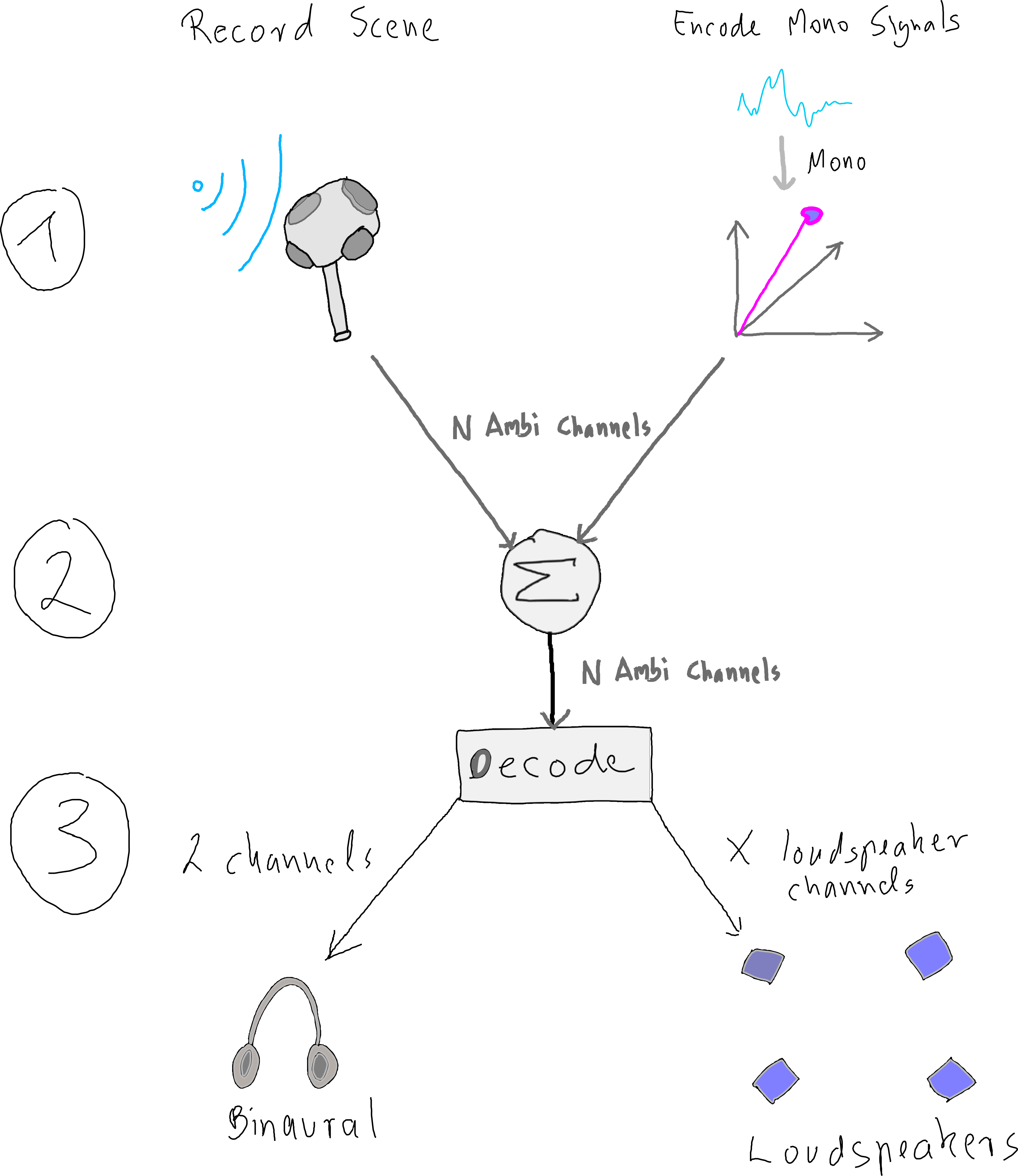

A basic Ambisonics production workflow can be split into three stages, as shown in Figure 1. The advantage of this procedure is that production is independent of the output format, because the intermediate format is in the Ambisonics domain. A sound field produced in this way can subsequently be rendered, or decoded, to any desired loudspeaker setup or to headphones.

Figure 1: Basic Ambisonics production workflow.

Stages

1: Encoding Stage

In the encoding stage, Ambisonics signals are generated. This can happen by recording with an Ambisonics microphone or by encoding mono sources with individual angles (azimuth, elevation). A plain Ambisonics encoding does not include distance information, although distance can be approximated through attenuation. All encoded signals have the same number $N$ of Ambisonics channels.
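The encoding of a mono source can be sketched as follows. This is a minimal illustration, not a reference implementation: it assumes first-order Ambisonics ($N = 4$ channels), ACN channel order (W, Y, Z, X) and SN3D normalization, none of which are prescribed by the text above. The function name `encode_first_order` and the clamped $1/r$ distance attenuation are likewise illustrative choices.

```python
import math


def encode_first_order(signal, azimuth_deg, elevation_deg, distance=None):
    """Encode a mono signal into 4 first-order Ambisonics channels.

    Assumed conventions: ACN order (W, Y, Z, X), SN3D normalization,
    azimuth counterclockwise from the front, elevation upwards.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)

    # Direction-dependent encoding gains for W, Y, Z, X.
    gains = [
        1.0,
        math.sin(az) * math.cos(el),
        math.sin(el),
        math.cos(az) * math.cos(el),
    ]

    # Optional distance cue: simple 1/r attenuation, clamped below r = 1
    # so that very close sources are not amplified (assumed model).
    attenuation = 1.0 if distance is None else 1.0 / max(distance, 1.0)

    return [[g * attenuation * s for s in signal] for g in gains]


# Example: a short mono signal placed to the left, at twice the unit distance.
encoded = encode_first_order([1.0, 0.5, -0.25], azimuth_deg=90.0,
                             elevation_deg=0.0, distance=2.0)
```

Every source encoded this way yields the same channel layout, which is what makes the later stages independent of how many sources there are.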

2: Summation Stage

All individual Ambisonics signals can be summed channel by channel to create a single scene, that is, one sound field.
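Because every encoded signal has the same channel layout, the summation reduces to a channel-wise addition. A minimal sketch, assuming each encoded signal is a list of channels holding equally long lists of samples (the function name `sum_scenes` is hypothetical):

```python
def sum_scenes(*encoded_signals):
    """Mix several Ambisonics signals into one scene by channel-wise summation."""
    if not encoded_signals:
        raise ValueError("at least one encoded signal is required")

    n_channels = len(encoded_signals[0])
    n_samples = len(encoded_signals[0][0])

    # All inputs must share the same channel count and length.
    for sig in encoded_signals:
        if len(sig) != n_channels or any(len(ch) != n_samples for ch in sig):
            raise ValueError("all signals need the same channel count and length")

    return [
        [sum(sig[c][n] for sig in encoded_signals) for n in range(n_samples)]
        for c in range(n_channels)
    ]


# Example: two already encoded first-order signals (4 channels, 2 samples each).
source_a = [[1.0, 0.5], [0.0, 0.0], [0.0, 0.0], [1.0, 0.5]]
source_b = [[0.2, 0.2], [0.2, 0.2], [0.0, 0.0], [0.0, 0.0]]
scene = sum_scenes(source_a, source_b)
```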

3: Decoding Stage

In the decoding stage, the individual output signals are calculated from the Ambisonics scene. This requires either head-related transfer functions (for headphone playback) or the loudspeaker coordinates (for loudspeaker playback).
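For loudspeaker playback, one simple option is a sampling (projection) decoder, which evaluates the scene in the direction of each loudspeaker. The sketch below is only one of several possible decoder designs and assumes a first-order scene in ACN order with SN3D normalization and a reasonably uniform loudspeaker layout. The function name `decode_sampling` is hypothetical.

```python
import math


def decode_sampling(scene, speakers_deg):
    """Decode a first-order scene (W, Y, Z, X; SN3D) to loudspeaker signals.

    speakers_deg is a list of (azimuth, elevation) pairs in degrees.
    """
    w, y, z, x = scene
    n_speakers = len(speakers_deg)
    outputs = []

    for az_deg, el_deg in speakers_deg:
        az, el = math.radians(az_deg), math.radians(el_deg)
        # Unit vector pointing at the loudspeaker.
        ux = math.cos(az) * math.cos(el)
        uy = math.sin(az) * math.cos(el)
        uz = math.sin(el)

        # Factor 3 accounts for the SN3D scaling of the first-order channels.
        outputs.append([
            (wi + 3.0 * (xi * ux + yi * uy + zi * uz)) / n_speakers
            for wi, yi, zi, xi in zip(w, y, z, x)
        ])

    return outputs


# Example: scene with one frontal source, decoded to an octahedral layout.
scene = [[1.0], [0.0], [0.0], [1.0]]
layout = [(0, 0), (90, 0), (180, 0), (-90, 0), (0, 90), (0, -90)]
speaker_signals = decode_sampling(scene, layout)
```

Decoding to headphones would instead filter the scene with head-related transfer functions, which is not shown here.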

More advanced workflows may feature additional stages for manipulating encoded Ambisonics signals, including directional filtering or rotation of the audio scene.
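As an illustration of such a manipulation stage, a rotation of the whole scene around the vertical axis only mixes the two horizontal first-order channels. The following sketch again assumes a first-order scene in ACN order (W, Y, Z, X); the function name `rotate_yaw` is hypothetical, and rotations about other axes or of higher orders need larger rotation matrices.

```python
import math


def rotate_yaw(scene, angle_deg):
    """Rotate a first-order scene counterclockwise around the vertical axis."""
    w, y, z, x = scene
    c = math.cos(math.radians(angle_deg))
    s = math.sin(math.radians(angle_deg))

    # W (omnidirectional) and Z (vertical) are unaffected by a yaw rotation.
    x_rot = [xi * c - yi * s for xi, yi in zip(x, y)]
    y_rot = [xi * s + yi * c for xi, yi in zip(x, y)]

    return [list(w), y_rot, list(z), x_rot]


# Example: a frontal source is moved to the left by rotating the scene by 90 degrees.
scene = [[1.0], [0.0], [0.0], [1.0]]
rotated = rotate_yaw(scene, 90.0)
```

Since the rotation acts on the encoded scene, it applies to all sources at once and is independent of the later decoding.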

References

2015

- Matthias Frank, Franz Zotter, and Alois Sontacchi. Producing 3D audio in Ambisonics. In Audio Engineering Society Conference: 57th International Conference: The Future of Audio Entertainment Technology–Cinema, Television and the Internet. Audio Engineering Society, 2015.


2009

- Frank Melchior, Andreas Gräfe, and Andreas Partzsch. Spatial audio authoring for Ambisonics reproduction. In Proc. of the Ambisonics Symposium. 2009.
